Artificial Intelligence Accountability Policy

Overview

NTIA issued a Request for Comment on AI Accountability Policy on April 13, 2023 (RFC).1 The RFC included 34 questions about AI governance methods that could be employed to hold relevant actors accountable for AI system risks and harmful impacts. It specifically sought feedback on what policies would support the development of AI audits, assessments, certifications, and other mechanisms to create earned trust in AI systems, practices collectively known as AI assurance.

To be accountable, relevant actors must be able to assure others that the AI systems they are developing or deploying are worthy of trust, and face consequences when they are not.2 The RFC relied on the NIST delineation of “trustworthy AI” attributes: valid and reliable, safe, secure and resilient, privacy-enhanced, explainable and interpretable, accountable and transparent, and fair with harmful bias managed.3 To be clear, trust and assurance are not products that AI actors generate. Rather, trustworthiness involves a dynamic between parties; it is in part a function of how well those who use or are affected by AI systems can interrogate those systems and make determinations about them, either themselves or through proxies.

AI assurance efforts, as part of a larger accountability ecosystem, should allow government agencies and other stakeholders, as appropriate, to assess whether the system under review

- has substantiated claims made about its attributes and/or

- meets baseline criteria for “trustworthy AI.”

The RFC asked about the evaluations entities should conduct prior to and after deploying AI systems; the necessary conditions for AI system evaluations and certifications to validate claims and provide other assurance; different policies and approaches suitable for different use cases; helpful regulatory analogs in the development of an AI accountability ecosystem; regulatory requirements such as audits or licensing; and the appropriate role for the federal government in connection with AI assurance and other accountability mechanisms.

Over 1,440 unique comments from diverse stakeholders were submitted in response to the RFC and have been posted to Regulations.gov.4 An NTIA employee read every comment. Approximately 1,250 of the comments were submitted by individuals in their own capacity. Approximately 175 were submitted by organizations or individuals in their institutional capacity. Of this latter group, industry (including trade associations) accounted for approximately 48%, nonprofit advocacy for approximately 37%, and academic and other research organizations for approximately 15%. There were a few comments from elected and other governmental officials.

Since the release of the RFC, the Biden-Harris Administration has worked to advance trustworthy AI in several ways. In May 2023, the Administration secured commitments from leading AI developers to participate in a public evaluation of AI systems at DEF CON 31.5 The Administration also secured voluntary commitments from leading developers of “frontier” advanced AI systems (“White House Voluntary Commitments”) to advance trust and safety, including through evaluation and transparency measures that relate to queries in the RFC.6 In addition, the Administration secured voluntary commitments from healthcare companies related to AI.7 Most recently, President Biden issued an Executive Order on Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence (“AI EO”), which advances and coordinates the Administration’s efforts to ensure the safe and secure use of AI; promote responsible innovation, competition, and collaboration to create and maintain the United States’ leadership in AI; support American workers; advance equity and civil rights; protect Americans who increasingly use, interact with, or purchase AI and AI-enabled products; protect Americans’ privacy and civil liberties; manage the risks from the federal government’s use of AI; and lead global societal, economic, and technical progress.8 Administration efforts to advance trustworthy AI prior to the release of the RFC in April 2023 include most notably the NIST AI Risk Management Framework (NIST AI RMF)9 and the White House Blueprint for an AI Bill of Rights (Blueprint for AIBoR).10

Federal regulatory and law enforcement agencies have also advanced AI accountability efforts. A joint statement from the Federal Trade Commission, the Department of Justice’s Civil Rights Division, the Equal Employment Opportunity Commission, and the Consumer Financial Protection Bureau outlined the risks of unlawfully discriminatory outcomes produced by AI and other automated systems and asserted the respective agencies’ commitment to enforcing existing law.11 Other federal agencies are examining AI in connection with their missions.12 A number of different Congressional committees have held hearings, and members of Congress have introduced bills related to AI.13 State legislatures across the country have passed bills that affect AI,14 and localities are legislating as well.15

The United States has collaborated with international partners to consider AI accountability policy. The U.S.-EU Trade and Technology Council (TTC) issued a joint AI Roadmap and launched three expert groups in May 2023, of which one is focused on “monitoring and measuring AI risks.”16 These groups have issued a list of 65 key terms, wherever possible unifying disparate definitions.17 Participants in the 2023 Hiroshima G7 Summit have worked to advance shared international guiding principles and a code of conduct for trustworthy AI development.18 The Organization for Economic Cooperation and Development is working on accountability in AI.19 In Europe, the EU AI Act – which includes provisions addressing pre-release conformity certifications for high-risk systems, as well as transparency and audit provisions and special provisions for foundation models20 or general purpose AI – has continued on the path to becoming law.21 The EU Digital Services Act requires audits of the largest online platforms and search engines,22 and a recent EU Commission delegated act on audits indicates that it is important in this context to analyze algorithmic systems and technologies such as generative models.23

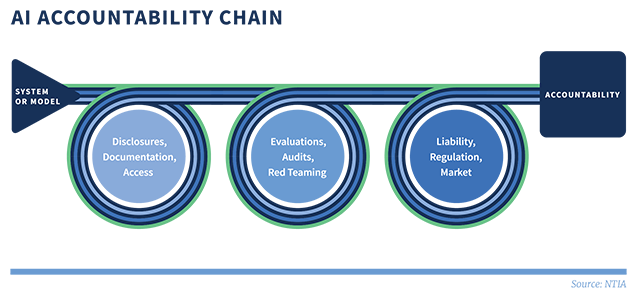

Multiple policy interventions may be necessary to achieve accountability. Take, for example, a policy promoting the disclosure to appropriate parties of training data details, performance limitations, and model characteristics for high-risk AI systems. Disclosure alone does not make an AI actor accountable. However, such information flows will likely be important for internal accountability within the AI actor’s domain and for external accountability as regulators, litigators, courts, and the public act on such information. Disclosure, then, is an accountability input whose effectiveness depends on other policies or conditions, such as the governing liability framework, relevant regulation, and market forces (in particular, customers’ and consumers’ ability to use the information disclosed to make purchase and use decisions). This report touches on how accountability inputs feed into the larger accountability apparatus and considers how these connections might be developed in further work.

Our final limitations on scope concern matters that are the focus of other federal government inquiries. Although NTIA received many comments related to intellectual property, particularly on the role of copyright in the development and deployment of AI, this Report is largely silent on intellectual property issues. Mitigating risks to intellectual property (e.g., infringement, unauthorized data transfers, unauthorized disclosures) is certainly a recognized component of AI accountability.24 These issues are under ongoing consideration at the U.S. Patent and Trademark Office (USPTO)25 and at the U.S. Copyright Office.26 We look forward to working with these agencies and others on these issues as warranted to help ensure that AI accountability and related transparency, safety, and other considerations relevant to the broader digital economy and Internet ecosystem are represented.27

Similarly, the role of privacy and the use of personal data in model training are topics of great interest and significance to AI accountability. More than 90% of all organizational commenters noted the importance of data protection and privacy to trustworthy and accountable AI.28 AI can exacerbate risks to Americans’ privacy, as recognized by the Blueprint for an AI Bill of Rights and the AI EO.29 Privacy protection is not only a focus of AI accountability, but importantly privacy also needs to be considered in the development and use of accountability tools. Documentation, disclosures, audits, and other forms of evaluation can result in the collection and exposure of personal information, thereby jeopardizing privacy if not properly designed and executed. Stronger and clearer rules for the protection of personal data are necessary through the passage of comprehensive federal privacy legislation and other actions by federal agencies and the Administration. The President has called on Congress to enact comprehensive federal privacy protections.30

Finally, open-source AI models, AI models with widely available model weights, and components of AI systems generally are of tremendous interest and raise distinct accountability issues. The AI EO tasked the Secretary of Commerce with soliciting input and issuing a report on “the potential benefits, risks, and implications, of dual-use foundation models for which the weights are widely available, as well as policy and regulatory recommendations pertaining to such models,”31 and NTIA has published a Request for Comment for the purpose of informing that report.32

Requisites for AI Accountability: Areas of Significant Commenter Agreement

This section outlines significant commenter alignment around cross-cutting issues, many of which are covered in more depth later. Such issues include calibrating AI accountability policies to risk, assuring AI systems across their lifecycle, standardizing disclosures and evaluations, and increasing the federal role in supporting and/or requiring certain accountability inputs.

Developing Accountability Inputs: A Deeper Dive

This section dives deeper into these issues, organizing the discussion around three key ingredients of AI accountability: (1) information flow, including documentation of AI system development and deployment; relevant disclosures appropriately detailed to the stakeholder audience; and provision to researchers and evaluators of adequate access to AI system components; (2) AI system evaluations, including government requirements for independent evaluation and pre-release certification (or licensing) in some cases; and (3) government support for an accountability ecosystem that widely distributes effective scrutiny of AI systems, including within government itself.

Using Accountability Inputs

This section shows how accountability inputs intersect with liability, regulatory, and market-forcing functions to ensure real consequences when AI actors forfeit trust.

Learning From Other Models

This section surveys lessons learned from other accountability models outside of the AI space.

Recommendations

This section concludes with recommendations for government action.

Glossary of Terms

This section provides a glossary of terms used in this Report.

1 National Telecommunications and Information Administration (NTIA), AI Accountability Policy Request for Comment, 88 Fed. Reg. 22433 (April 13, 2023) [hereinafter “AI Accountability RFC”].

2 See Claudio Novelli, Mariarosaria Taddeo, and Luciano Floridi, “Accountability in Artificial Intelligence: What It Is and How It Works,” AI & Society: Journal of Knowledge, Culture and Communication (Feb. 7, 2023) (stating that AI accountability “denotes a relation between an agent A and (what is usually called) a forum F, such that A must justify A’s conduct to F, and F supervises, asks questions to, and passes judgment on A on the basis of such justification. . . . Both A and F need not be natural, individual persons, and may be groups or legal persons.”) (italics in original).

3 National Institute of Standards and Technology (NIST), Artificial Intelligence Risk Management Framework (AI RMF 1.0) (Jan. 2023) [hereinafter “NIST AI RMF”]. The later-adopted AI EO uses the term “safe, secure, and trustworthy” AI. Because safety and security are part of NIST’s definition of “trustworthy,” this Report uses the “trustworthy” catch-all. Other policy documents use “responsible” AI. See, e.g., Government Accountability Office (GAO), Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities (GAO Report No. GAO-21-519SP), at 24 n.22 (June 30, 2021) (citing U.S. government documents using the term “responsible use” to entail AI system use that is responsible, equitable, traceable, reliable, and governable).

4 Comments in this proceeding are accessible through Regulations.gov, NTIA AI Accountability RFC (2023). An index linking each commenter name to its Regulations.gov commenter number is available at the Regulations.gov, NTIA AI RFC Commenter Name-Number Index page.

5 See The White House, FACT SHEET: Biden-Harris Administration Announces New Actions to Promote Responsible AI Innovation that Protects Americans’ Rights and Safety (May 4, 2023) (allowing “AI models to be evaluated thoroughly by thousands of community partners and AI experts to explore how the models align with the principles and practices outlined in the Biden-Harris Administration’s Blueprint for an AI Bill of Rights and AI Risk Management Framework”).

6 See The White House, FACT SHEET: Biden-Harris Administration Secures Voluntary Commitments from Leading Artificial Intelligence Companies to Manage the Risks Posed by AI (July 21, 2023); The White House, Ensuring Safe, Secure and Trustworthy AI (July 21, 2023) [hereinafter “First Round White House Voluntary Commitments”] (detailing the commitments to red-team models, sharing information among companies and the government, investment in cybersecurity, incentivizing third-party issue discovery and reporting, and transparency through watermarking, among other provisions); The White House, FACT SHEET: Biden-Harris Administration Secures Voluntary Commitments from Eight Additional Artificial Intelligence Companies to Manage the Risks Posed by AI (Sept. 12, 2023); The White House, Voluntary AI Commitments (September 12, 2023) [hereinafter “Second Round White House Voluntary Commitments”].

7 The White House, FACT SHEET: Biden-Harris Administration Announces Voluntary Commitments from Leading Healthcare Companies to Harness the Potential and Manage the Risks Posed by AI (December 14, 2023).

8 Executive Order No. 14110, Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, 88 Fed. Reg. 75191 [hereinafter “AI EO”] (2023) at Sec. 2.

9 NIST AI RMF; see also U.S.-E.U. Trade and Technology Council (TTC), TTC Joint Roadmap on Evaluation and Measurement Tools for Trustworthy AI and Risk Management (Dec. 1, 2022), at 9 (“The AI RMF is a voluntary framework seeking to provide a flexible, structured, and measurable process to address AI risks prospectively and continuously throughout the AI lifecycle. […] Using the AI RMF can assist organizations, industries, and society to understand and determine their acceptable levels of risk. The AI RMF is not a compliance mechanism, nor is it a checklist intended to be used in isolation. It is law- and regulation-agnostic, as AI policy discussions are live and evolving.”).

10 The White House, Blueprint for an AI Bill of Rights: Making Automated Systems Work for the American People (Oct. 2022) [hereinafter “Blueprint for AIBoR”].

11 See Rohit Chopra, Kristen Clarke, Charlotte A. Burrows, and Lina M. Khan, Joint Statement on Enforcement Efforts Against Discrimination and Bias in Automated Systems (April 25, 2023) [hereinafter “Joint Statement on Enforcement Efforts”]; Consumer Financial Protection Circular, 2023-03, Adverse action notification requirements and the proper use of the CFPB’s sample forms provided in Regulation B. See also, Consumer Financial Protection Bureau, CFPB Issues Guidance on Credit Denials by Lenders Using Artificial Intelligence (Sept. 2023); Equal Employment Opportunity Commission, Select Issues: Assessing Adverse Impact in Software, Algorithms, and Artificial Intelligence Used in Employment Selection Procedures Under Title VII of the Civil Rights Act of 1964 (May 18, 2023).

12 See, e.g., U.S. Department of Education Office of Educational Technology, Artificial Intelligence and the Future of Teaching and Learning: Insights and Recommendations (May 2023); Engler, infra note 359 (referring to initiatives by the U.S. Food and Drug Administration); U.S. Department of State, Artificial Intelligence (AI); U.S. Department of Health and Human Services, Trustworthy AI (TAI) Playbook (September 2021); U.S. Department of Homeland Security Science & Technology Directorate, Artificial Intelligence (September 2023).

13 See, e.g., Laurie A. Harris, Artificial Intelligence: Overview, Recent Advances, and Considerations for the 118th Congress, Congressional Research Service (Aug. 4, 2023), at 9-10; Anna Lenhart, Roundup of Federal Legislative Proposals that Pertain to Generative AI: Part II, Tech Policy Press (Aug. 9, 2023); see also, e.g., U.S. House of Representatives Committee on Oversight and Accountability Subcommittee on Cybersecurity, Information Technology, and Government Innovation, Advances in AI: Are We Ready For a Tech Revolution? (subcommittee hearing) (March 8, 2023); U.S. House of Representatives Committee on Science, Space, and Technology, Artificial Intelligence: Advancing Innovation Towards the National Interest (committee hearing) (June 22, 2023); U.S. Senate Committee on the Judiciary Subcommittee on Privacy, Technology, and the Law, Oversight of A.I.: Rules for Artificial Intelligence (committee hearing) (May 16, 2023).

14 See Katrina Zhu, The State of State AI Laws: 2023, Electronic Privacy Information Center (Aug. 3, 2023) (providing an inventory of state legislation).

15 See, e.g., The New York City Council, A Local Law to Amend the Administrative Code of the City of New York, in Relation to Automated Employment Decision Tools, Local Law No. 2021/144 (Dec. 11, 2021).

16 See The White House, FACT SHEET: U.S.-EU Trade and Technology Council Deepens Transatlantic Ties (May 31, 2023).

17 See The White House, U.S.-EU Joint Statement of the Trade and Technology Council (May 31, 2023); supra note 9, U.S.-E.U. Trade and Technology Council (TTC).

18 The White House, G7 Leaders’ Statement on the Hiroshima AI Process (Oct. 30, 2023); Hiroshima Process International Guiding Principles for Organizations Developing Advanced AI System (Oct. 30, 2023); Hiroshima Process International Code of Conduct for Organizations Developing Advanced AI Systems (Oct. 30, 2023).

19 See, e.g., OECD, Advancing Accountability in AI: Governing and Managing Risks Throughout the Lifecycle for Trustworthy AI (Feb. 2023). See also United Nations, High-level Advisory Body on Artificial Intelligence (calling for “[g]lobally coordinated AI governance” as the “only way to harness AI for humanity, while addressing its risks and uncertainties, as AI-related applications, algorithms, computing capacity and expertise become more widespread internationally” and describing the mandate of the new High-level Advisory Body on Artificial Intelligence to undertake “analysis and advance recommendations for the international governance of AI”).

20 We use the term “foundation model” to refer to models which are “trained on broad data at scale and are adaptable to a wide range of downstream tasks,” like “BERT, DALL-E, [and] GPT-3.” See Rishi Bommasani et al., On the Opportunities and Risks of Foundation Models, arXiv (July 12, 2022).

21 See Presidency of the Council of the European Union, Note 5662/24, Analysis of the final compromise text with a view to agreement, Proposal for a Regulation of the European Parliament and of the Council laying down rules on artificial intelligence (Artificial Intelligence Act) and amending certain Union legislative acts (January 26, 2024) (containing the text of the proposed EU AI Act as agreed between the European Parliament and the Council of the European Union) [hereinafter “EU AI Act”]; European Parliament, Artificial Intelligence Act: Deal on Comprehensive Rules for Trustworthy AI, European Parliament News (Dec. 12, 2023).

22 See European Commission, Digital Services Act: Commission Designates First Set of Very Large Online Platforms and Search Engines (April 25, 2023).

23 See European Commission, Commission Delegated Regulation (EU) Supplementing Regulation (EU) 2022/2065 of the European Parliament and of the Council, by Laying Down Rules on the Performance of Audits for Very Large Online Platforms and Very Large Online Search Engines (Oct. 20, 2023), at 2, 14.

24 See, e.g., NIST AI RMF at 16, 24 (recognizing that training data should follow applicable intellectual property rights laws and that policies and procedures should be in place to address risks of infringement of a third party’s intellectual property or other rights); Hiroshima Process International Code of Conduct for Organizations Developing Advanced AI Systems, supra note 18 at 8 (calling on organizations to “implement appropriate data input measures and protections for personal data and intellectual property” and encouraging organizations “to implement appropriate safeguards, to respect rights related to privacy and intellectual property, including copyright-protected content.”).

25 The USPTO will clarify and make recommendations on key issues at the intersection of intellectual property and artificial intelligence. See AI EO Section 5.2. See also U.S. Patent and Trademark Office, Request for Comments Regarding Artificial Intelligence and Inventorship, 88 Fed. Reg. 9492 (Feb. 14, 2023); U.S. Patent and Trademark Office, Public Views on Artificial Intelligence and Intellectual Property Policy (Oct. 2020); U.S. Patent and Trademark Office, Artificial Intelligence.

26 See, e.g., U.S. Copyright Office, Notice of Inquiry and Request for Comments on Artificial Intelligence and Copyright, 88 Fed. Reg. 59942 (Aug. 30, 2023) [hereinafter “Copyright Office AI RFC”]; U.S. Copyright Office Comment at 2 (describing the Copyright Office’s ongoing work at the intersection of AI and copyright law and policy); U.S. Copyright Office, Copyright and Artificial Intelligence.

27 See U.S. Copyright Office Comment at 2 (“We are, however, cognizant that the policy issues implicated by rapidly developing AI technologies are bigger than any individual agency’s authority, and that NTIA’s accountability inquiries may align with our work.”); see also Copyright Office AI RFC at 59,944 n.22 (mentioning the U.S. Copyright Office’s consideration of AI in the regulatory context of the Digital Millennium Copyright Act rulemaking). By law, NTIA plays a consultation role in the rulemaking and has previously commented on petitions for exemptions that involve considerations of AI. See 17 U.S.C. § 1201(a)(1)(C); NTIA, Recommendations of the National Telecommunications and Information Administration to the Register of Copyrights in the Eighth Triennial Section 1201 Rulemaking at 48-58 (Oct. 1, 2021).

28 See, e.g., Data & Society Comment at 7; Google DeepMind Comment at 3; Global Partners Digital Comment at 15; Hitachi Comment at 10; TechNet Comment at 4; NCTA Comment at 4-5; Centre for Information Policy Leadership (CIPL) Comment at 1; Access Now Comment at 3-5; BSA | The Software Alliance Comment at 12; U.S. Chamber of Commerce Comment at 9 (discussing the need for federal privacy protection); Business Roundtable Comment at 10 (supporting a passage of a federal privacy/consumer data security law to align compliance efforts across the nation); CTIA Comment at 1, 4-7 (declaring that federal privacy legislation is necessary to avoid the current fragmentation); Salesforce Comment at 9 (“The lack of an overarching Federal standard means that the data which powers AI systems could be collected in a way that prevents the development of trusted AI. Further, we believe that any comprehensive federal privacy legislation in the United States should include provisions prohibiting the use of personal data to discriminate on the basis of protected characteristics”).

29 See AI EO at Sec. 2(f) (“Artificial Intelligence is making it easier to extract, re-identify, link, infer, and act on sensitive information about people’s identities, locations, habits, and desires. Artificial Intelligence’s capabilities in these areas can increase the risk that personal data could be exploited and exposed.”); Sec. 9.

30 See The White House, Readout of White House Listening Session on Tech Platform Accountability (Sept. 8, 2022) [hereinafter “Readout of White House Listening Session”].

31 AI EO at Sec. 4.6.

32 National Telecommunications and Information Administration, Dual Use Foundation Artificial Intelligence Models With Widely Available Model Weights, 89 Fed. Reg. 14059 (Feb. 26, 2024).